Experiments and Observational Studies




Experiments and Observational Studies Chapter 13

Objectives: • Observational study • Retrospective study • Prospective study • Experiment • Experimental units • treatment • response • Factor • Level • Principles of experimental design • Statistically significant • Control group • Blinding • Placebo • Blocking • Matching • Confounding

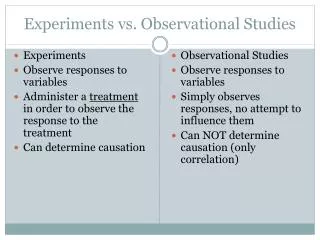

Observes individuals and records variables of interest but does not attempt to influence the response (does not impose a treatment). • Allows the researcher to directly observe the behavior of interest rather than rely on the subject’s self-descriptions (survey). • Allows the researcher to study the subject in its natural environment, thus removing the potentially biased effect of the unnatural laboratory setting on the subject’s performance (animal behavior). Observational Study

Two Types • Field Observation – Observations are made in a particular natural setting over an extended period of time. • Systematic Observation – Observations of one or more particular behaviors in a specific setting. • Since observational studies do not impose a treatment, it is not possible to prove a cause-and-effect relationship with an observational study. Observational Study

Example: • Researchers compared the scholastic performance of music students with that of non-music students. The music students had a much higher overall grade point average than the non-music students, 3.59 to 2.91. Also, 16% of the music students had all A’s compared with only 5% of the non-music students. Observational Study

In an observational study, researchers don’t assign choices; they simply observe them. • The example looked at the relationship between music education and grades. • Since the researchers did not assign students to get music education and simply observed students “in the wild,” it was an observational study. • Because researchers in the example first identified subjects who studied music and then collected data on their past grades, this was a retrospective study. Observational Study

Observational studies that try to discover variables related to rare outcomes, such as specific diseases, are often retrospective. They first identify people with the disease and then look into their history and heritage in search of things that may be related to their condition. • Retrospective studies have a restricted view of the world because they are usually restricted to a small part of the entire population. • Because retrospective studies are based on historical data, they can have errors. • Do you recall exactly what you ate yesterday? How about last Monday? Retrospective Study

A somewhat better approach to an observational study than using historical data, as in a retrospective study, is to identify subjects in advance and collect data as events unfold. This is called a prospective study. • In our example studying the relationship between music education and grades, had the researchers identified subjects in advance and collected data over an entire school year or years, the study would have been a prospective study. Prospective Study

Observational studies are valuable for discovering trends and possible relationships. • However, it is not possible for observational studies, whether prospective or retrospective, to demonstrate a cause and effect relationship. There are too many lurking variables that may affect the relationship. Observational Study

Definition: Experiment – deliberately imposes some treatment on individuals in order to observe their responses. • Basic Experimental Design • Subject → Treatment → Observation • Because the purpose of an experiment is to reveal the response of one variable to changes in other variables, the distinction between explanatory and response variables is essential. Experiment

An experiment is a study design that allows us to prove a cause-and-effect relationship. • In an experiment, the experimenter must identify at least one explanatory variable, called a factor, to manipulate and at least one response variable to measure. • An experiment: • Manipulates factor levels to create treatments. • Randomly assigns subjects to these treatment levels. • Compares the responses of the subject groups across treatment levels. Experiment

In an experiment, the experimenter actively and deliberately manipulates the factors to control the details of the possible treatments, and assigns the subjects to those treatments at random. • The experimenter then observes the response variable and compares responses for different groups of subjects who have been treated differently. Experiment

In general, the individuals on whom or which we experiment are called experimental units. • When humans are involved, they are commonly called subjects or participants. • The specific values that the experimenter chooses for a factor are called the levels of the factor. • A treatment is a combination of specific levels from all the factors that an experimental unit receives. Experiment

Experimental Units • The individuals or items on which the experiment is performed. • When the experimental units are human beings, the term subject is often used in place of experimental unit. • Response variable • The characteristic of the experimental outcome that is being measured or observed. Review - Experimental Terminology

Factor • The explanatory variables in an experiment. • A variable whose effect on the response variable is of interest in the experiment. • Levels • The different possible values of a factor. Review - Experimental Terminology

Treatment • A specific experimental condition applied to the units of an experiment. • For one-factor experiments, the treatments are the levels of the single factor. • For multifactor experiments, each treatment is a combination of the levels of the factors. Review - Experimental Terminology

Researchers studying the absorption of a drug into the bloodstream inject the drug into 25 people. 30 minutes after the injection they measure the concentration of the drug in each person’s blood. • Identify the: • Experimental units. • Response variable. • Factors. • Levels of each factor. • Treatments. Example:

Researchers studying the absorption of a drug into the bloodstream inject the drug into 25 people. 30 minutes after the injection they measure the concentration of the drug in each person’s blood. • Experimental units • Subjects, the 25 people injected • Response variable • Concentration of the drug in the blood • Factors • Single factor – the drug • Levels • One level – the dose • Treatment • Injecting the drug Answer:

Weight gain of Golden Torch Cacti. Researchers examined the effects of a hydrophilic polymer and irrigation regime on weight gain. For this study the researchers chose the hydrophilic polymer P4. P4 was either used or not used, and five irrigation regimes were employed: none, light, medium, heavy, and very heavy. • Identify the: • Experimental units. • Response variable. • Factors. • Levels of each factor. • Treatments. Your Turn:

Weight gain of Golden Torch Cacti. Researchers examined the effects of a hydrophilic polymer and irrigation regime on weight gain. For this study the researchers chose the hydrophilic polymer P4. P4 was either used or not used, and five irrigation regimes were employed: none, light, medium, heavy, and very heavy. • Experimental units • The cacti used in the study • Response variable • The weight gain of the cacti • Factors • Two factors – the hydrophilic polymer P4 and the irrigation regime • Levels • P4 has two levels: with and without. • Irrigation regime has five levels: none, light, medium, heavy, and very heavy. • Treatment • There are 10 different treatments, each a combination of a level of P4 and a level of irrigation regime. See next slide for treatments. Answer:

Schematic for the 10 Treatments in the Cactus Study (diagram columns: Factors, Levels, Treatments)
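The 10 treatments can also be listed by crossing the levels of the two factors. The following is a minimal Python sketch, not part of the original slides; the variable names and the level labels "with P4" and "without P4" are chosen here for illustration.

```python
from itertools import product

# Factor levels as described in the cactus study.
polymer_levels = ["with P4", "without P4"]
irrigation_levels = ["none", "light", "medium", "heavy", "very heavy"]

# Each treatment is one combination of a polymer level and an irrigation level.
treatments = list(product(polymer_levels, irrigation_levels))

for number, (polymer, irrigation) in enumerate(treatments, start=1):
    print(f"Treatment {number}: {polymer}, irrigation {irrigation}")
```

Two levels of one factor crossed with five levels of the other give 2 × 5 = 10 treatments, matching the count in the answer.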

Manipulates the factor levels to create treatments. • Randomly assigns subjects to these treatments. • Compares the responses of the subject groups across treatment levels. Randomized, Comparative Experiment

Control • Randomize • Replicate • Block The Four Principles of Experimental Design

Control: • Good experimental design reduces variability by controlling the sources of variation. • We control sources of variation other than the factors we are testing by making conditions as similar as possible for all treatment groups. • Comparison is an important form of control. Every experiment must have at least two groups so the effect of a treatment can be compared with either the effect of a traditional treatment or the effect of no treatment at all. The Four Principles of Experimental Design

Randomize: • Subjects should be randomly divided into groups to avoid unintentional selection bias in constituting the groups, that is, to make the groups as similar as possible. • Randomization allows us to equalize the effects of unknown or uncontrollable sources of variation. • It does not eliminate the effects of these sources, but it spreads them out across the treatment levels so that we can see past them. • Without randomization, you do not have a valid experiment and will not be able to use the powerful methods of Statistics to draw conclusions from your study. The Four Principles of Experimental Design

Randomize: • One source of variation is confounding variables (discussed later): variables that we did not think to measure but that can affect the response variable. • Randomization to treatment groups reduces bias by equalizing the effects of confounding variables. The Four Principles of Experimental Design
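One simple way to carry out random assignment is to shuffle the list of subjects and deal them out to the treatment groups in turn. The sketch below is an illustration only: the function name `randomly_assign`, the subject IDs, and the seed value are assumptions made for this example, not part of the original slides.

```python
import random

def randomly_assign(units, treatments, seed=None):
    """Shuffle the units, then deal them out to the treatments in turn."""
    rng = random.Random(seed)   # a fixed seed makes the assignment reproducible
    shuffled = list(units)      # copy so the caller's list is left unchanged
    rng.shuffle(shuffled)
    groups = {treatment: [] for treatment in treatments}
    for index, unit in enumerate(shuffled):
        groups[treatments[index % len(treatments)]].append(unit)
    return groups

# Hypothetical subject IDs, used only for illustration.
subjects = [f"subject_{i}" for i in range(1, 21)]
groups = randomly_assign(subjects, ["treatment", "control"], seed=13)

for name, members in groups.items():
    print(name, len(members), members)
```

Because chance alone decides who lands in each group, the experimenter cannot, even unintentionally, steer particular kinds of subjects toward one treatment.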

Replicate: • Repeat the experiment, applying the treatments to a number of subjects. • One or two subjects do not constitute an experiment. • The outcome of an experiment on a single subject is an anecdote, not data. • A sufficient number of subjects should be used to ensure that randomization creates groups that resemble each other closely and to increase the chances of detecting differences among the treatments when such differences actually exist. The Four Principles of Experimental Design
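The claim that larger groups resemble each other more closely can be checked with a small simulation. The sketch below assumes a hypothetical baseline trait drawn from a standard normal distribution; the function name `average_imbalance` and all numbers are illustrative and do not come from the original slides.

```python
import random
import statistics

def average_imbalance(group_size, repetitions=200, seed=0):
    """Average gap between two randomly formed groups on a baseline trait."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(repetitions):
        # Hypothetical baseline trait measured before any treatment is applied.
        baseline = [rng.gauss(0, 1) for _ in range(2 * group_size)]
        rng.shuffle(baseline)  # random assignment into two equal groups
        group_a = baseline[:group_size]
        group_b = baseline[group_size:]
        gaps.append(abs(statistics.mean(group_a) - statistics.mean(group_b)))
    return statistics.mean(gaps)

for size in (2, 20, 200):
    print(f"{size} subjects per group: average gap {average_imbalance(size):.3f}")
```

With only a couple of subjects per group, the groups can differ substantially before any treatment is applied; the gap shrinks as the group size grows.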

Example: Replication The outcome of an experiment on a single subject is an anecdote, not data.

Replicate: • When the experimental group is not a representative sample of the population of interest, we might want to replicate an entire experiment for different groups, in different situations, etc. • Replication of an entire experiment with the controlled sources of variation at different levels is an essential step in science. • The experiment should be designed in such a way that other researchers can replicate the results. The Four Principles of Experimental Design

Block: • Sometimes, attributes of the experimental units that we are not studying and that we can’t control may nevertheless affect the outcomes of an experiment. • If we group similar individuals together and then randomize within each of these blocks, we can remove much of the variability due to the difference among the blocks. • Note: Blocking is an important compromise between randomization and control, but, unlike the first three principles, is not required in an experimental design. The Four Principles of Experimental Design

It’s often helpful to diagram the procedure of an experiment. • The following diagram emphasizes the random allocation of subjects to treatment groups, the separate treatments applied to these groups, and the ultimate comparison of results: Diagrams of Experiments Flow Chart

Randomization produces groups of experimental units that should be similar in all respects before the treatments are applied. • Comparative design ensures that influences other than the experimental treatments operate equally on all groups. • Therefore, differences in the response variable must be due to the effects of the treatments. That is, the treatments not only are associated with the observed differences in the response but must actually cause them (cause and effect). Logic of Experimental Design

How large do the differences need to be to say that there is a difference in the treatments? • Differences that are larger than we’d get just from the randomization alone are called statistically significant. • We’ll talk more about statistical significance later on. For now, the important point is that a difference is statistically significant if we don’t believe that it’s likely to have occurred only by chance. Does the Difference Make a Difference?
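One way to see what "larger than we'd get just from the randomization alone" means is to reshuffle the group labels many times and count how often chance produces a difference at least as large as the one observed. This is a sketch of a randomization (permutation) test, which the slides do not name; the response values are made up for illustration, and the function name is an assumption.

```python
import random
import statistics

def randomization_p_value(group_a, group_b, reshuffles=5000, seed=0):
    """Share of random relabelings whose mean difference is at least as large as observed."""
    rng = random.Random(seed)
    observed = abs(statistics.mean(group_a) - statistics.mean(group_b))
    pooled = list(group_a) + list(group_b)
    size_a = len(group_a)
    at_least_as_large = 0
    for _ in range(reshuffles):
        rng.shuffle(pooled)  # pretend the treatment labels were assigned differently
        difference = abs(statistics.mean(pooled[:size_a]) - statistics.mean(pooled[size_a:]))
        if difference >= observed:
            at_least_as_large += 1
    return at_least_as_large / reshuffles

# Made-up responses, for illustration only.
treated = [12.1, 11.4, 13.0, 12.7, 11.9, 12.5]
control = [10.2, 10.9, 9.8, 10.5, 11.0, 10.1]

p_value = randomization_p_value(treated, control)
print(f"Share of reshuffles at least as extreme: {p_value:.4f}")
```

A small share suggests the observed difference is unlikely to have occurred only by chance, which is the sense of "statistically significant" used here.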

Both experiments and sample surveys use randomization to get unbiased data. • But they do so in different ways and for different purposes: • Sample surveys try to estimate population parameters, so the sample needs to be as representative of the population as possible. • Experiments try to assess the effects of treatments, and experimental units are not always drawn randomly from a population. Experiments and Samples

Often, we want to compare a situation involving a specific treatment to the status quo situation. • A baseline (“business as usual”) measurement is called a control treatment, and the experimental units to whom it is applied are called the control group. Control Treatments

When we know what treatment was assigned, it’s difficult not to let that knowledge influence our assessment of the response, even when we try to be careful. • In order to avoid the bias that might result from knowing what treatment was assigned, we use blinding. • There are two main classes of individuals who can affect the outcome of the experiment: • those who could influence the results (subjects, treatment administrators, technicians) • those who evaluate the results (judges, treating physicians, etc.) Blinding

When all individuals in either one of these classes are blinded, an experiment is said to be single-blind. • Single-Blind: An experiment is said to be single-blind if the subjects of the experiment do not know which treatment group they have been assigned to or those who evaluate the results of the experiment do not know how subjects have been allocated to treatment groups. • When everyone in both classes is blinded, the experiment is called double-blind. • Double-Blind: An experiment is said to be double-blind if neither the subjects nor the evaluators know how the subjects have been allocated to treatment groups. Blinding

Often simply applying any treatment can induce an improvement. • To separate out the effects of the treatment of interest, we can use a control treatment that mimics the treatment itself. • A “fake” treatment that looks just like the treatment being tested is called a placebo. • Placebos are the best way to blind subjects from knowing whether they are receiving the treatment or not. Placebos

The placebo effect occurs when taking the sham treatment results in a change in the response variable. • This highlights both the importance of effective blinding and the importance of comparing treatments with a control. • Placebo controls are so effective that you should use them as an essential tool for blinding whenever possible. Placebos

Completely Randomized Experiment (the ideal simple design) • Goal • State what you want to know. • Response • Specify the response variable. • Treatments • Specify the factor levels and the treatments. Designing an Experiment Step-By-Step

Experimental units • Specify the experimental units. • Experimental Design • Observe the 4 principles of experimental design: • Control – any sources of variability you know of and can control. • Randomize – randomly assign experimental units to treatments, to equalize the effects of unknown or uncontrollable sources of variation. Specify how the random numbers needed for randomization will be obtained. • Replicate – place sufficient experimental units in each treatment group. • Block – if required, group similar individuals together. Designing an Experiment Step-By-Step

Specify any other experiment details • Give enough details so that another experimenter could exactly replicate your experiment. • How to measure the response. Designing an Experiment Step-By-Step

Researchers believe that diuretics may be as effective in reducing a person’s blood pressure as the conventional drug (drug A), which is much more expensive and has more unwanted side effects. Design a randomized comparative experiment to test this hypothesis. Randomized Comparative Experiment Example:

Explanatory Variable • Type of medication • Treatments • Diuretic • Drug A • Response Variable • Change in blood pressure Randomized Comparative Experiment Example:

Can chest pain be relieved by drilling holes in the heart? Since 1980, surgeons have been using a laser procedure to drill holes in the heart. Many patients report a lasting and dramatic decrease in chest pain. Is the relief due to the procedure or is it a placebo effect? • Design a randomized comparative experiment, using a group of 298 volunteers with severe chest pain, to test this procedure’s effectiveness. Randomized Comparative Experiment Your Turn:

are usually: • randomized. • comparative. • double-blind. • placebo-controlled. The Best Experiments…

Block Design • Matched Pairs Design Other Experimental Designs

When groups of experimental units are similar, it’s often a good idea to gather them together into blocks. • Blocking isolates the variability due to the differences between the blocks so that we can see the differences due to the treatments more clearly. • In effect, we are conducting two parallel experiments. We use blocks to reduce variability so that we can see the effect of the treatments. The blocks themselves are not treatments. • When randomization occurs only within the blocks, we call the design a randomized block design. Blocking
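A randomized block design can be sketched by running the random assignment separately inside each block. In the Python sketch below, the function name `randomized_block_assignment`, the block names, and the unit IDs are hypothetical and serve only as an illustration.

```python
import random

def randomized_block_assignment(units_by_block, treatments, seed=None):
    """Randomly assign treatments separately within each block."""
    rng = random.Random(seed)
    assignment = {}
    for block, units in units_by_block.items():
        shuffled = list(units)
        rng.shuffle(shuffled)  # randomization happens only inside this block
        for index, unit in enumerate(shuffled):
            assignment[unit] = (block, treatments[index % len(treatments)])
    return assignment

# Hypothetical blocks of similar experimental units.
blocks = {
    "block_1": ["u1", "u2", "u3", "u4"],
    "block_2": ["u5", "u6", "u7", "u8"],
}
assignment = randomized_block_assignment(blocks, ["treatment", "control"], seed=13)

for unit, (block, treatment) in sorted(assignment.items()):
    print(unit, block, treatment)
```

Each block receives every treatment equally often, so differences between blocks cannot be mistaken for differences between treatments.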